In an earlier article we wrote about one particular technique for tempting a bot into revealing that it’s a bad bot. This approach is elaborate, but it works.

Every bot has its own particular character, based on its desired end result. The MouseTrap solution helps us target one particular type of bot, but it certainly won’t catch them all.

So we need a few more ways to catch them, and the simplest way to do this is to use signals.

What exactly are Bots?

Before we can talk about bots and their characteristics, we first need to get clear on what a bot is. Here’s one attempt at defining it:

A bot is a software application that is programmed to do certain tasks. Bots are automated, which means they run according to their instructions without a human user needing to start them up. Bots often imitate or replace a human user’s behavior. Typically they do repetitive tasks, and they can do them much faster than human users could.

CloudFlare.

For our purposes, we’re most interested in malicious bots: those that exhibit misleading behaviour, violate our terms of service, or attempt to gain access where they have no permission or business doing so.

What are Bot Signals?

Bot Signals are indicators that a visitor is a bot. They’re not proof.

When driving, we use indicators to tell everyone we’re about to turn. It’s a signal.

If you’ve ever driven a car, you know that the indicator of the car in front isn’t always right. Sure, the car will probably turn, but experience has taught you that it isn’t always the case.

The opposite is true, too. You haven’t been driving long if you’ve never had a car suddenly make a turn without any notice.

The point is, signals are just that – indicators, but not conclusive proof.

It’s with signals that we can detect bots and eventually block them. Just as with cars, one indicator alone is not usually enough to draw firm conclusions, but if we get enough of them, they point us in the right direction.

What are some Bot Signals that we can use?

Here are just a few heuristics we can use to identify bots, some more definitive than others.

#1 Failed WordPress Logins

If you get a failed WordPress login, this may indicate a bot, or it may be a user who’s forgotten their password.

But if you get 20 failed logins in succession, chances are high it’s a bot.
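To make the “20 in succession” idea concrete, here’s a minimal sketch of counting failed logins per IP address inside a sliding time window. It isn’t Shield’s implementation. The class name `FailedLoginTracker`, the 10-minute window, and the method names are all assumptions made for illustration.

```python
from collections import defaultdict, deque


class FailedLoginTracker:
    """Counts recent failed logins per IP (hypothetical helper, not Shield code)."""

    def __init__(self, threshold=20, window_seconds=600):
        self.threshold = threshold            # failures needed to raise the signal
        self.window_seconds = window_seconds  # assumed window; tune for your site
        self._failures = defaultdict(deque)   # ip -> timestamps of recent failures

    def record_failure(self, ip, now):
        """Record one failed login; return True once the IP looks like a bot."""
        stamps = self._failures[ip]
        stamps.append(now)
        # Forget failures that have fallen out of the time window.
        while stamps and now - stamps[0] > self.window_seconds:
            stamps.popleft()
        return len(stamps) >= self.threshold

    def record_success(self, ip):
        """A successful login breaks the streak, so the count starts over."""
        self._failures.pop(ip, None)


# Usage: a low threshold just to show the behaviour.
tracker = FailedLoginTracker(threshold=3, window_seconds=60)
for second in (0, 10, 20):
    suspicious = tracker.record_failure("203.0.113.5", now=second)
```

A single failure never trips the signal here, which matches the point above: a user who has forgotten their password looks very different from an IP hammering the login form.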

#2 Failed WordPress Logins using a non-existent username

Just like a failed login, this may indicate a bot’s attempt to log in. Since it used a non-existent username, chances are higher that it’s a bot, but it’s not 100%.

What if a user just typed in their username incorrectly?

#3 XML-RPC Access

XML-RPC isn’t going anywhere any time soon so your site likely gets pinged with legitimate XML-RPC requests even though you have no use for it.

But it’s also a tempting place for bots to hit, so repeated requests to your XML-RPC endpoint are likely to come from a bot too.

#4 Accessing Non-Existent Pages/Assets – 404 Errors

This is a tricky one. If you haven’t been keeping your site’s URLs and links in sync, you could well have broken links on your site that normal users are going to click, producing perfectly legitimate 404s.

404s are definitely best considered a “signal” and not a definitive bot request. But, if you’re absolutely sure you’re on top of your broken links and you’re handling everything properly, you could treat it as more than just a signal.

#5 Fake Web Crawlers

Fake-what-now? Picture the Google web crawler for a moment – the bot that scans your website for search engine results. How do we know that this particular bot is an official Google bot?

Because it tells us it is, through the User Agent ID.

When browsing to a web page, your browser will send along a piece of text that gives the web server details of the browser you’re using. For example, that it’s Google Chrome, the version is 73.0.3686, it’s 64-bit, etc. This is the User Agent ID.

The Google web crawler does the same thing, and it usually has the text ‘Googlebot‘ in there somewhere, or something similar.

But what’s to stop any bot from throwing ‘Googlebot’ into its User Agent string and making us think it’s a Google web crawler?

Absolutely nothing, of course. And they do.

What can we do about that? Well, it turns out there are ways to confirm whether a bot is really a Google bot or not. Shield Security has been doing this for a long time already.

And because we can confirm whether a bot is real-Google or fake-Google, we can now use this as a ‘bad bot’ signal. We can confidently state that a fake-Google user agent is a bad bot, masquerading as a good one.
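One way to confirm a Googlebot claim is the reverse-then-forward DNS check that Google itself documents: look up the hostname for the visitor’s IP, check it belongs to Google, then look that hostname up again and make sure it points back to the same IP. The sketch below shows the idea. We’re not saying this is exactly how Shield does it. The function names and the suffix list are assumptions, and the forward lookup used here only handles IPv4.

```python
import socket

# Hostname suffixes Google documents for Googlebot (assumed to be current).
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")


def reverse_dns(ip):
    """Return the hostname that the IP address resolves back to."""
    return socket.gethostbyaddr(ip)[0]


def forward_dns(hostname):
    """Return the list of IPv4 addresses the hostname resolves to."""
    return socket.gethostbyname_ex(hostname)[2]


def is_fake_googlebot(user_agent, ip, reverse=reverse_dns, forward=forward_dns):
    """True if the request claims to be Googlebot but fails the DNS checks."""
    if "googlebot" not in user_agent.lower():
        return False  # no Googlebot claim, so this signal doesn't apply
    try:
        hostname = reverse(ip).rstrip(".").lower()
        if not hostname.endswith(GOOGLE_SUFFIXES):
            return True  # the IP doesn't belong to a Google crawler hostname
        # The hostname must resolve back to the very same IP.
        return ip not in forward(hostname)
    except OSError:
        # Lookup failed, so the claim can't be confirmed. Treated as fake here;
        # a production system might prefer an "unknown" state instead.
        return True
```

The `reverse` and `forward` parameters exist only so the lookups can be swapped out in tests. In real use you would also want to cache the results, since DNS lookups on every request are slow.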

#6 Empty User Agents

We discussed what User Agents are, above. All normal traffic from people has a user agent. But some bots can be sloppy and neglect to include a user agent in their requests. This suggests that perhaps they’re a bot.

Care needs to be taken with this setting as not all webhosts are properly configured to populate the user agent request value in PHP. This can make it appear that there’s no User Agent sent with a request, when there is. You’ll need to test this on your hosting platform to ensure that you can use this signal.
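The check itself is tiny. Here’s a rough sketch for a Python WSGI application, where the header arrives as `HTTP_USER_AGENT` in the request environment, much as it does in PHP’s `$_SERVER`. The function name is made up for this example, and the hosting caveat above applies just the same: confirm that your platform actually passes the header through before acting on this signal.

```python
def has_empty_user_agent(environ):
    """True if the WSGI request carries no usable User-Agent header."""
    # A missing header and a blank or whitespace-only header are treated alike.
    return not (environ.get("HTTP_USER_AGENT") or "").strip()
```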

#7 Bot MouseTrap

This signal uses the fake link strategy outlined in more detail in our earlier MouseTrap article.

#8 More…

Shield has had many other bot detection systems for years, such as comment spam detection and bot logins. There will always be more signals we can draw upon and these will come in time.

How can we start using these Bot Signals to protect our sites?

Some signals are already within Shield Security and have been for a long time (see #8 above). But we’ve added signals #1-#7 described above into the next major release of Shield Pro.

Our latest Shield Security (7.3) will be out in the next week or so.

All of the signals outlined above will be available for all Shield Pro customers, while the ‘Failed WordPress Login’ signal will also be made available for free users.

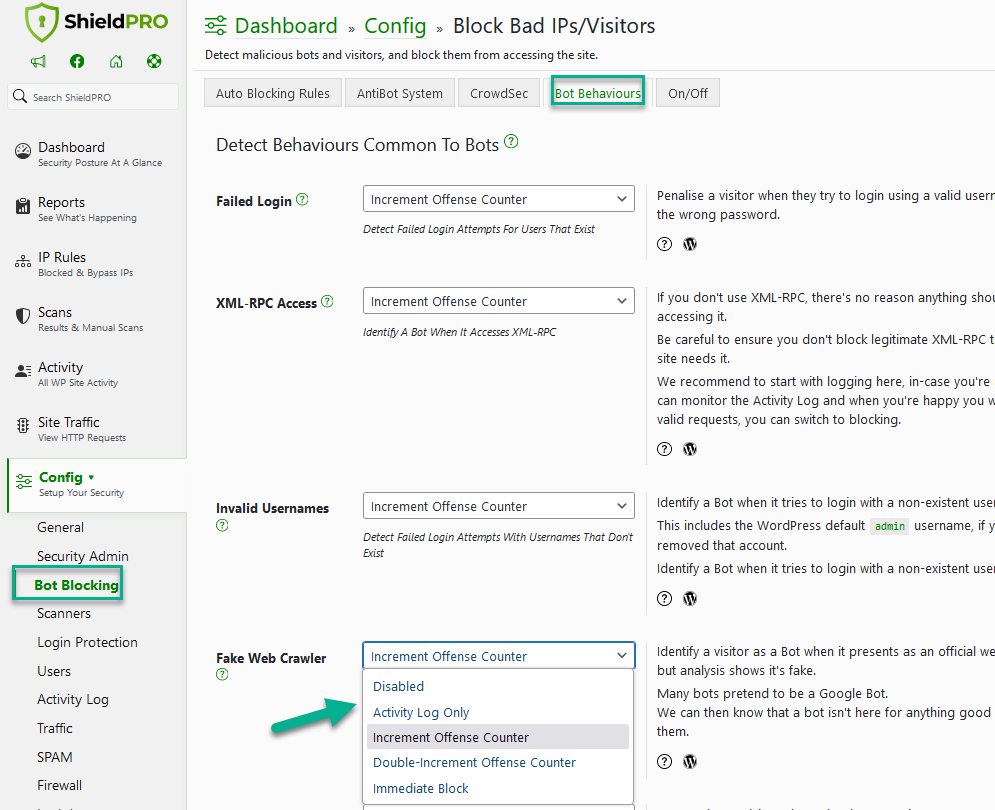

You’ll be able to configure each of these signals independently from each other and you’ll also be able to decide how you want Shield to respond. You’ll have 4 options to choose from:

- Activity Log Only. This option lets you see the activity of these bots on the activity log before applying any transgressions or blocks to offenders. It’ll let you test-drive the signal before making it take effect.

- Increment Offense Counter (by 1). This option puts another black mark against an IP. As always with the transgression system, once the limit is reached for an IP address, it is blocked from accessing the site.

- Double-Increment Offense Counter (by 2). We’ve added the ability to give weight to certain behaviours. By allowing the transgression counter to increment by 2, the IP will reach the limit more quickly, and be blocked sooner.

- Immediate Block. If you decide that a particular signal on your site is severe enough, you can have Shield immediately mark that IP as blocked.
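To show how the four options relate to each other, here’s a small sketch of an offense counter that supports all of them. This is an illustration only, not Shield’s code: the `Response` and `OffenseTracker` names, the signal labels, and the limit of 10 are all assumptions.

```python
from enum import Enum


class Response(Enum):
    LOG_ONLY = "log_only"
    INCREMENT = "increment"                # offense counter +1
    DOUBLE_INCREMENT = "double_increment"  # offense counter +2
    IMMEDIATE_BLOCK = "immediate_block"


# How much each counting response adds to an IP's offense counter.
OFFENSE_WEIGHT = {Response.INCREMENT: 1, Response.DOUBLE_INCREMENT: 2}


class OffenseTracker:
    """Applies the configured response for each signal (hypothetical helper)."""

    def __init__(self, limit=10):
        self.limit = limit   # assumed offense limit before an IP is blocked
        self.offenses = {}   # ip -> current offense count
        self.blocked = set()
        self.log = []        # every signal is logged, whatever the response

    def handle(self, ip, signal, response):
        """Log the signal, apply the response, and return True if ip is blocked."""
        self.log.append((ip, signal, response.value))
        if response is Response.IMMEDIATE_BLOCK:
            self.blocked.add(ip)
        elif response in OFFENSE_WEIGHT:
            self.offenses[ip] = self.offenses.get(ip, 0) + OFFENSE_WEIGHT[response]
            if self.offenses[ip] >= self.limit:
                self.blocked.add(ip)
        return ip in self.blocked


# Usage: each signal is mapped to the response you chose for it.
tracker = OffenseTracker(limit=3)
tracker.handle("198.51.100.7", "404", Response.LOG_ONLY)
tracker.handle("198.51.100.7", "failed_login", Response.INCREMENT)
is_blocked = tracker.handle("198.51.100.7", "xmlrpc", Response.DOUBLE_INCREMENT)
```

Notice that the log-only response never touches the counter, which is what makes it safe for test-driving a signal.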

Take a Test Drive with the Activity Log option

Any good security configuration needs time to tweak and adjust to ensure it works for you and your particular sites.

This is why each of these signals has the “Activity Log Only” option, letting you see what’s happening before you apply any security measures against offenders.

We strongly recommend using the logging option if you’re unsure of any adverse effects on your normal visitors.

Questions and Comments

There’s a lot to take in here and you’ll want to take your time to dig into this.

Please do leave us questions below about any of the topics raised here.
